Shredders

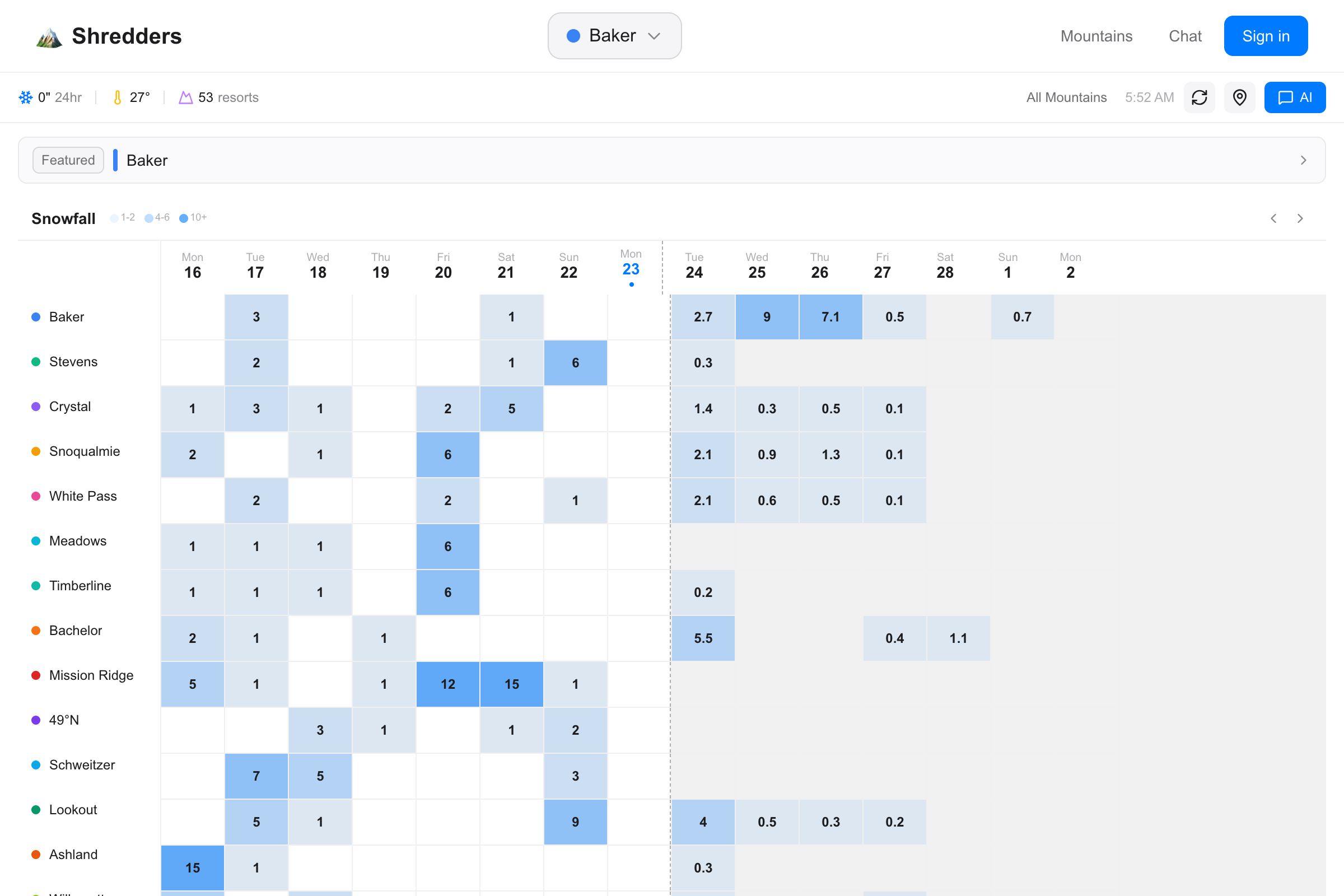

Real-time powder conditions for 53 ski resorts across 11 regions.

53 resorts · 11 regions · 90 API routes · 24 database tables · 7 data sources

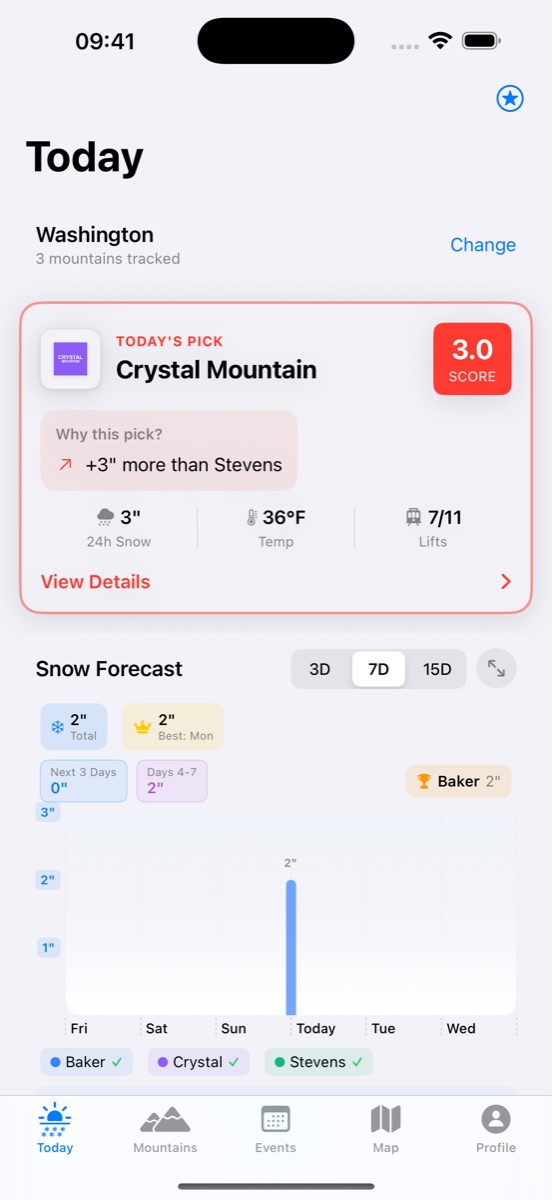

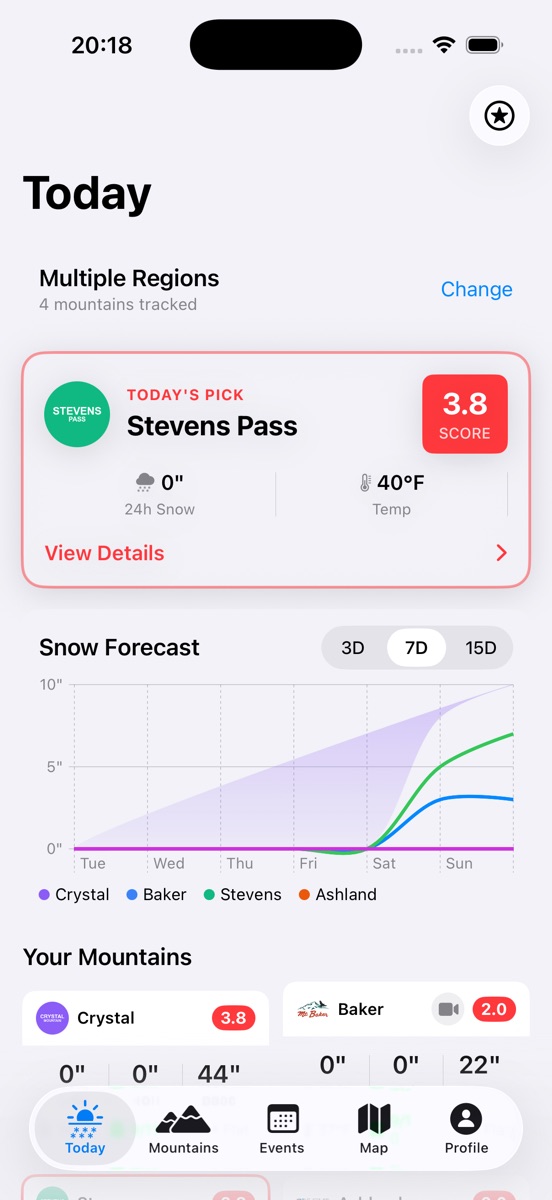

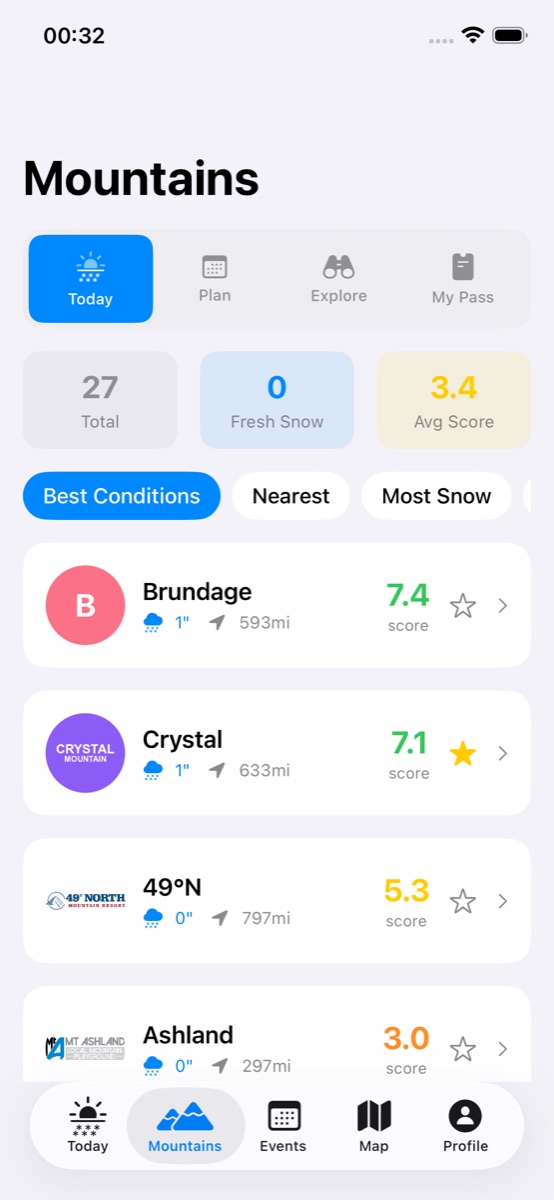

iOS app — Today's forecast and Mountain rankings

The Problem

Planning a ski day in the Pacific Northwest means checking five different websites. SNOTEL for snowpack. NOAA for the forecast. WSDOT for road conditions. The resort's webcam page. Your group chat for who's actually going.

By the time you've assembled the picture, your motivation to get up at 5 AM has evaporated.

Shredders aggregates seven data sources into one dashboard — web and iOS — with an 8-factor powder score, 7-day forecasts, weather alerts, AI chat for trip planning, and social features for coordinating with friends.

Architecture

Diagram components: NOAA / Weather.gov · Open-Meteo · WSDOT · RainViewer · Resort websites · Puppeteer (JS-rendered) · REST APIs · Cron schedules · 24 tables · 14 migrations · Upstash Redis cache · Claude chat (streaming) · GPT-4 predictions · iOS (SwiftUI) · Push notifications

The backend is a Next.js 16 app with 90 API routes organized across mountains (batch conditions, forecasts, powder score, alerts, webcams, lifts, parking, trip advice), auth (email + Apple Sign-In), events (CRUD, RSVP, carpool, recurring series), social (check-ins, likes, photos), and AI chat. Three Vercel cron jobs handle daily event reminders, weather alerts, and powder notifications.

The database is Supabase PostgreSQL with 24 tables spanning users, events (with attendees, comments, photos, activity feeds, date polls, invite tokens), social features, push tokens, alert subscriptions, sessions, audit logs, mountain status, and scraper health monitoring.

The Powder Score

An 8-factor composite score from 0–100: recent snowfall (24h/48h/7d), temperature at elevation, wind and gusts, visibility, current conditions, upcoming snow in the forecast, base depth, and seasonal trends. Designed to answer one question: is it worth the drive?
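
A minimal sketch of how a weighted composite like this could be computed. The factor names follow the list above, but the weights, and the assumption that every factor arrives already normalized to 0..1, are illustrative only and not the values Shredders uses.

```typescript
// Each factor is assumed to be pre-normalized to 0..1 (1 = ideal conditions).
// Factor names mirror the eight inputs listed above; the weights are hypothetical.
type Factor =
  | "recentSnowfall"
  | "temperature"
  | "wind"
  | "visibility"
  | "currentConditions"
  | "forecastSnow"
  | "baseDepth"
  | "seasonalTrend";

const WEIGHTS: Record<Factor, number> = {
  recentSnowfall: 0.3,
  temperature: 0.1,
  wind: 0.1,
  visibility: 0.05,
  currentConditions: 0.1,
  forecastSnow: 0.15,
  baseDepth: 0.15,
  seasonalTrend: 0.05,
};

function powderScore(factors: Record<Factor, number>): number {
  let weighted = 0;
  let totalWeight = 0;
  for (const name of Object.keys(WEIGHTS) as Factor[]) {
    // Clamp so an out-of-range reading cannot push the score outside 0..100.
    const value = Math.min(1, Math.max(0, factors[name]));
    weighted += value * WEIGHTS[name];
    totalWeight += WEIGHTS[name];
  }
  // Dividing by the summed weights keeps the result on a 0..100 scale
  // even if the weights are later retuned and no longer sum to 1.
  return Math.round((weighted / totalWeight) * 100);
}
```

Normalizing each input first keeps the units (inches, degrees, mph) out of the scoring step, so retuning the score only means changing weights.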

Performance

The original frontend made 45+ API calls on page load. Batch endpoints collapsed that to 3. Response caching at 10-minute intervals keeps Supabase query volume manageable. The iOS app launches with 2 API calls. Images are served as AVIF/WebP with lazy loading.
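
The batching idea can be sketched as a single function that fans out over mountain IDs server-side and returns one payload. The `Conditions` shape, the `Loader` type, and the `batchConditions` name are hypothetical; the real endpoints and response fields are not shown in this write-up.

```typescript
// Hypothetical response shape for one mountain.
interface Conditions {
  mountainId: string;
  snowfall24hIn: number;
}

// Hypothetical per-mountain loader (a database query, upstream API call, etc.).
type Loader = (mountainId: string) => Promise<Conditions>;

interface BatchResult {
  results: Record<string, Conditions>;
  errors: Record<string, string>;
}

// Load conditions for many mountains concurrently and return one payload.
// A failure for one mountain is recorded in `errors` instead of failing the batch.
async function batchConditions(
  mountainIds: string[],
  load: Loader,
): Promise<BatchResult> {
  const results: Record<string, Conditions> = {};
  const errors: Record<string, string> = {};
  await Promise.all(
    mountainIds.map(async (id) => {
      try {
        results[id] = await load(id);
      } catch (err) {
        errors[id] = String(err);
      }
    }),
  );
  return { results, errors };
}
```

With this shape the client makes one request per data type rather than one per mountain, and a single failing mountain degrades the page instead of blanking it.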

Decisions

Why Supabase over Firebase?

I needed real-time subscriptions, auth, and a relational database in one platform, without managing infrastructure for a side project. Supabase gave me Postgres (real SQL, not document queries), built-in auth with Apple Sign-In support, and auto-generated APIs. The tradeoff: those auto-generated APIs are fast to build against, but you lose fine-grained control over query optimization once you reach 90 endpoints.

Why scrape resort websites?

No resort provides a usable API. Lift status, webcam URLs, and parking lot capacity all live on PHP pages last redesigned in 2014. Cheerio handles HTML parsing and Puppeteer covers JavaScript-rendered content. The downside is fragility: every time Crystal Mountain redesigns its snow report, a scraper breaks. I monitor scraper health with dedicated database tables and cron-based health checks.
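
As a rough illustration of the health tracking described above, the sketch below counts consecutive failed runs per scraper and flags it once a threshold is crossed. The `ScraperHealth` fields and the threshold of three are assumptions; the actual monitoring tables are not described in detail here.

```typescript
// Hypothetical health record for one scraper (one row per scraper in a table).
interface ScraperHealth {
  consecutiveFailures: number;
  lastSuccessAt: number | null; // epoch milliseconds
  lastError: string | null;
}

type RunOutcome = { status: "ok" } | { status: "error"; error: string };

// Assumed threshold: flag a scraper after three failed runs in a row.
const FAILURE_THRESHOLD = 3;

// Fold the outcome of one scraper run into its health record.
function recordRun(prev: ScraperHealth, outcome: RunOutcome, now: number): ScraperHealth {
  if (outcome.status === "ok") {
    return { consecutiveFailures: 0, lastSuccessAt: now, lastError: null };
  }
  return {
    consecutiveFailures: prev.consecutiveFailures + 1,
    lastSuccessAt: prev.lastSuccessAt,
    lastError: outcome.error,
  };
}

// A cron-driven health check could alert on any scraper where this is true.
function isUnhealthy(health: ScraperHealth): boolean {
  return health.consecutiveFailures >= FAILURE_THRESHOLD;
}
```

Counting consecutive failures rather than alerting on the first one avoids noise from transient network errors, while a real redesign still trips the check within a few runs.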

Why dual platform from day one?

Ski conditions are checked at 5 AM from bed while deciding whether to make the drive. That's a phone experience. The web app is better for planning — bigger screen, map view, event coordination — but the iOS app is where the real usage happens. SwiftUI made it fast to build, and push notifications for powder alerts were table stakes.

Why 90 API routes?

Each route does one thing and is simple to reason about. I chose many small endpoints over fewer, more complex ones because it let me move fast — add a feature, add a route. The tradeoff is maintenance surface area. Refactoring touches a lot of files. Batch endpoints for the frontend helped offset the chattiness.

Tradeoffs

Scraping is inherently fragile. Resort websites change without notice. I've built monitoring to catch failures fast, but a broken scraper still means missing data until I fix the parser. There's no way around this without official APIs.

Free tier constraints shape the architecture. Supabase and Vercel free tiers are generous but not infinite. Redis caching via Upstash keeps Supabase query volume under control. Batch API endpoints keep Vercel serverless invocations reasonable. If usage grew 10x, I'd need paid tiers or a different hosting strategy.
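
To show the caching pattern in isolation, here is a small time-to-live cache that only calls the loader when an entry is missing or expired. It uses an in-memory `Map` as a stand-in for Redis and does not show the Upstash client API; `createTtlCache` and the injected clock are hypothetical names for illustration.

```typescript
// In-memory stand-in for a Redis-style cache with a time-to-live per key.
// The clock is injected so expiry can be exercised without waiting.
function createTtlCache<V>(ttlMs: number, now: () => number = () => Date.now()) {
  const entries = new Map<string, { value: V; expiresAt: number }>();
  return {
    // Return the cached value while it is fresh; otherwise load and store it.
    getOrLoad(key: string, load: () => V): V {
      const hit = entries.get(key);
      if (hit !== undefined && hit.expiresAt > now()) {
        return hit.value;
      }
      const value = load();
      entries.set(key, { value, expiresAt: now() + ttlMs });
      return value;
    },
  };
}

// Ten minutes, matching the cache window mentioned under Performance.
const TEN_MINUTES_MS = 10 * 60 * 1000;
```

Under this pattern, each key triggers at most one database read per ten-minute window, however many requests arrive in between.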

Dual auth adds complexity for a side project. Supporting both email/password and Apple Sign-In means JWT rotation, token blacklisting, session tracking, and rate limiting across two flows. It's table stakes for an iOS app, but it's more auth code than most side projects warrant.